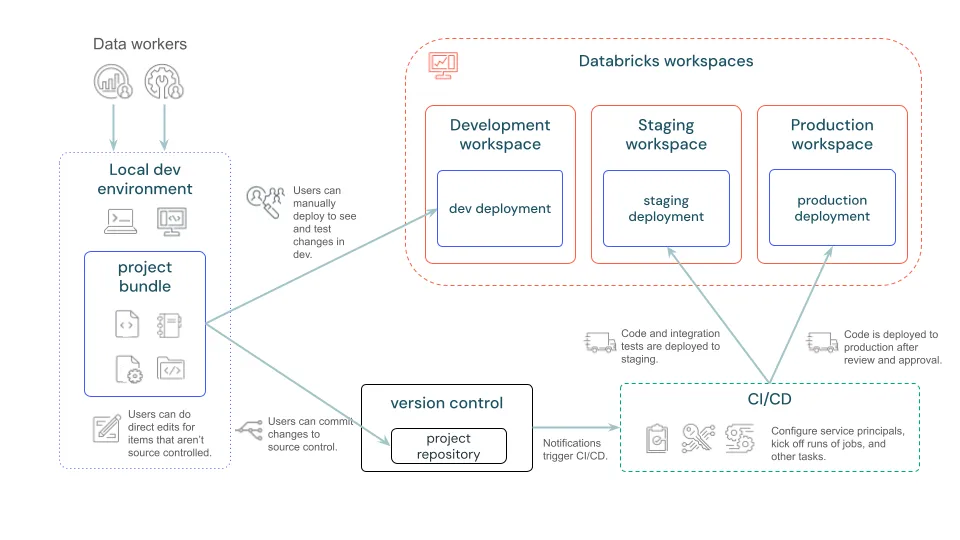

Databricks has become the go-to platform for unifying data, AI, and analytics. But as projects grow, so does the challenge of managing jobs, pipelines, ML models, and infrastructure consistently across dev, staging, and production.

That’s where Databricks Asset Bundles (DAB) come in.

Think of them as the Infrastructure-as-Code (IaC) framework for Databricks: a declarative way to define, version, and automatically deploy all your Databricks resources.

What are Databricks Asset Bundles?

Databricks Asset Bundles are a tool that makes it easier to apply software engineering best practices, including source control, code review, testing, and CI/CD, to your data and AI projects.

A Databricks Asset Bundle pairs your project's source files with YAML configuration, rooted in a databricks.yml file, that declares the resources your project needs:

- Jobs

- Pipelines

- Volumes and schemas

- etc.

Here’s a simple bundle definition (databricks.yml):

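```yaml
bundle:
  name: my-sample-bundle

resources:
  jobs:
    daily-sales-job:
      name: "Daily Sales Report"
      tasks:
        - task_key: run-notebook
          notebook_task:
            # Local notebook paths are relative to this file and include the file
            # extension (a Python source notebook, .py, is assumed here).
            notebook_path: ./notebooks/sales_report.py
          # No cluster is specified, so the task runs on serverless compute
          # (assuming serverless jobs are enabled in the workspace).
```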

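Validate the configuration, deploy it to the workspace, and trigger the job by its resource key:

```bash
databricks bundle validate
databricks bundle deploy
databricks bundle run daily-sales-job
```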
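The example above only declares a job, but the same resources mapping can hold the other resource types listed earlier. The sketch below adds a schema, a volume, and a pipeline; these entries would sit alongside jobs under the same resources mapping. The catalog main, the resource keys, and the pipeline source path are hypothetical placeholders, not values from this project.

```yaml
resources:
  schemas:
    sales_schema:                # hypothetical resource key
      catalog_name: main         # assumed existing Unity Catalog catalog
      name: sales
      comment: Managed by Databricks Asset Bundles

  volumes:
    raw_files:
      catalog_name: main
      # Referencing the schema declared above makes the bundle create it first
      schema_name: ${resources.schemas.sales_schema.name}
      name: raw_files
      volume_type: MANAGED

  pipelines:
    sales_pipeline:
      name: sales-pipeline
      catalog: main
      target: ${resources.schemas.sales_schema.name}   # schema the pipeline publishes to
      serverless: true
      libraries:
        - notebook:
            path: ./pipelines/sales_pipeline.py        # hypothetical pipeline source file
```

The ${resources...} reference is what lets the bundle work out the dependency order between resources, instead of relying on the order they appear in the file.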

Get Started

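1. Install the Databricks CLI (latest version)

Asset Bundles need the current Databricks CLI (version 0.218.0 or above). The legacy databricks-cli package on PyPI does not support bundles, so install the CLI with Homebrew instead (the install script from the databricks/setup-cli GitHub repository is an alternative on Linux):

```bash
brew tap databricks/tap
brew install databricks
```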

2. Verify Databricks CLI installation

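```bash
databricks --version
```

Before deploying anything, also authenticate the CLI against your workspace (for example with the databricks auth login command).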

3. Initialize a Bundle

```bash
databricks bundle init
```

When prompted, choose a template (e.g., default-python).

Example Use Case: Multi-Environment Deployment

Folder layout:

```
├─ databricks.yml
├─ targets/
│  ├─ dev.yml
│  ├─ stg.yml
│  └─ prod.yml
└─ resources/
   └─ jobs.yml
```

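databricks.yml

```yaml
bundle:
  name: my-lakehouse

include:
  - targets/*.yml
  - resources/*.yml

# Custom variables must be declared here before targets can assign values to them.
variables:
  env:
    description: Environment name (dev, stg, prod)
  uc_catalog:
    description: Unity Catalog catalog used by the jobs
```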

Example targets:

targets/dev.yml

```yaml
targets:
  dev:
    workspace:
      host: https://adb-222222222222.11.azuredatabricks.net
      root_path: /Shared/bundles/my-lakehouse/dev
    run_as:
      # Databricks expects the service principal's application ID here
      service_principal_name: spn-my-lakehouse-dev
    variables:
      env: dev
      uc_catalog: dev_catalog
```
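The folder layout also lists targets/stg.yml, which isn't shown. Following the same pattern, it might look like the sketch below; the workspace host, service principal, and catalog name are hypothetical placeholders to replace with your own values.

```yaml
targets:
  stg:
    workspace:
      host: https://<your-staging-workspace>.azuredatabricks.net   # placeholder
      root_path: /Shared/bundles/my-lakehouse/stg
    run_as:
      service_principal_name: <staging-service-principal-application-id>   # placeholder
    variables:
      env: stg
      uc_catalog: stg_catalog   # hypothetical catalog name
```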

targets/prod.yml

```yaml
targets:
  prod:
    workspace:
      host: https://adb-333333333333.12.azuredatabricks.net
      root_path: /Shared/bundles/my-lakehouse/prod
    run_as:
      service_principal_name: spn-my-lakehouse-prod
    variables:
      env: prod
      uc_catalog: prod_catalog
```

Shared resources:

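resources/jobs.yml

```yaml
resources:
  jobs:
    etl_daily:
      name: etl-daily-${var.env}
      tasks:
        - task_key: run
          notebook_task:
            # Relative paths resolve from this file, so step up out of resources/.
            # The .py extension is an assumption about the notebook's format.
            notebook_path: ../notebooks/etl_daily.py
            # Passed to the notebook as a widget value
            base_parameters:
              UC_CATALOG: ${var.uc_catalog}
          # No cluster spec: runs on serverless compute if the workspace supports it.
```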

Now you can deploy per environment:

```bash
databricks bundle deploy --target dev
databricks bundle deploy --target stg
databricks bundle deploy --target prod
```

Asset Bundles: Key Advantages

- Consistency → The same definitions work across dev, staging, and prod, with only environment-specific overrides.

- Version Control → Store configs in Git, enabling history, pull requests, and rollbacks.

- Governance → Bundles bring IaC practices (reviewed, versioned changes and jobs that run as dedicated service principals) that align with enterprise compliance requirements.

- Automation → CI/CD pipelines can deploy automatically from Git.

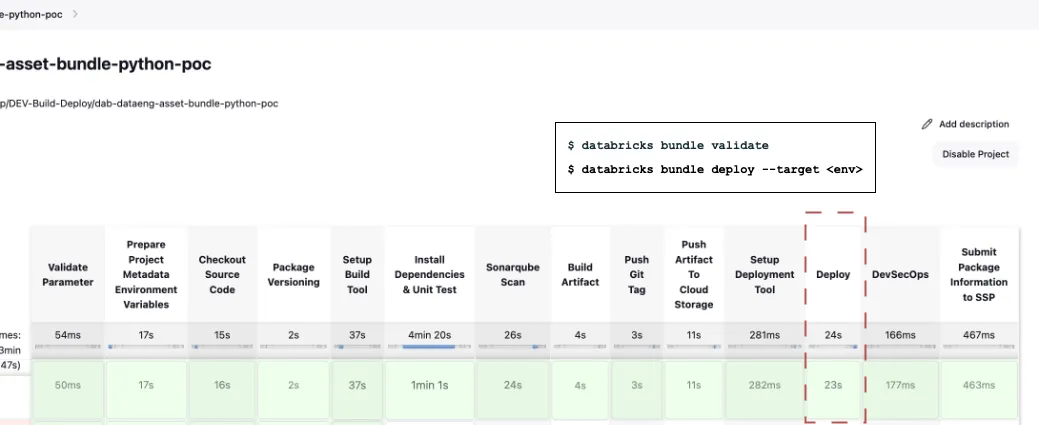

Integrated with CI/CD
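As a sketch of that integration, a GitHub Actions workflow could validate and deploy the prod target on every push to main. The workflow file name, branch, and secret names are assumptions; the workflow relies on the databricks/setup-cli action to install the CLI and on OAuth machine-to-machine credentials for a service principal that is allowed to deploy to the prod workspace.

```yaml
# .github/workflows/deploy-prod.yml (hypothetical file name)
name: Deploy bundle to prod

on:
  push:
    branches: [main]   # assumed release branch

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      # Installs the Databricks CLI on the runner
      - uses: databricks/setup-cli@main

      - name: Validate and deploy
        run: |
          databricks bundle validate --target prod
          databricks bundle deploy --target prod
        env:
          # The workspace host comes from targets/prod.yml; the secret names are hypothetical
          DATABRICKS_CLIENT_ID: ${{ secrets.DATABRICKS_CLIENT_ID }}
          DATABRICKS_CLIENT_SECRET: ${{ secrets.DATABRICKS_CLIENT_SECRET }}
```

Running validate before deploy makes the pipeline fail fast on configuration errors, such as an undeclared variable, before anything changes in the workspace.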

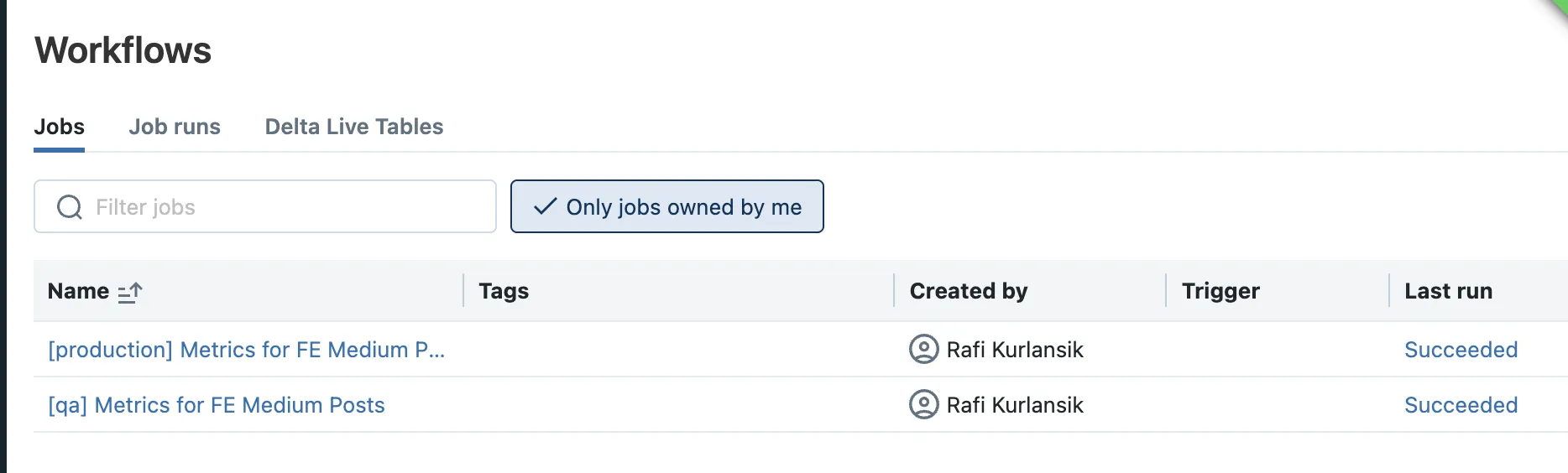

Example of a Databricks job deployed with Asset Bundles

Conclusion

Databricks Asset Bundles bring reliability, governance, and automation to data/AI workflows. You can scale smoothly from dev to prod with the same codebase and only environment-specific overrides.

If your team still configures jobs manually in the UI, now is the right time to adopt Asset Bundles. They’re the future of CI/CD on Databricks.